We have used many different tools for bug tracking, product management, project management, to-do lists and code review, such as ClearCase, ClearQuest, Bugzilla, GitHub, Asana, Pivotal Tracker and Google Drive. Phabricator, a software engineering web application platform originally developed at Facebook, seemed almost too good to be true. It has code review, a wiki, repository browsing, tickets and a lot more to make Phab more fabulous.

Phabricator is an open source collection of web applications that help software companies build better software. The most important tools in the suite are:

Maniphest – Bug tracker/task management tracker

Diffusion – source code browser

Differential – code review tool that lets developers submit reviews to one another from a command line tool when they check in code using Git or Subversion

Phriction – wiki tool

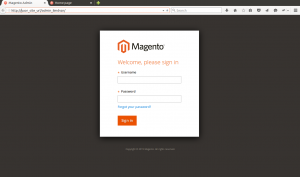

How to set up and configure the code review and project management tool – Phabricator

Installation

Server – 4GB Digital ocean droplet

OS – Ubuntu 14.04

1. Install dependencies

apt-get install mysql-server apache2 dpkg-dev php5 php5-mysql php5-gd php5-dev php5-curl php-apc php5-cli php5-json

2. Get code

#cd /var/www/codereview

git clone https://github.com/phacility/libphutil.git

git clone https://github.com/phacility/arcanist.git

git clone https://github.com/phacility/phabricator.git

3. Configure virtual host entry

#add the lines below to /etc/apache2/sites-available/phabricator.conf

#######################################################################

<VirtualHost *:80>

DocumentRoot /var/www/codereview/phabricator/webroot

RewriteEngine on

RewriteRule ^/rsrc/(.*) - [L,QSA]

RewriteRule ^/favicon.ico - [L,QSA]

RewriteRule ^(.*)$ /index.php?__path__=$1 [B,L,QSA]

<Directory "/var/www/codereview/phabricator/webroot">

Order allow,deny

allow from all

</Directory>

</VirtualHost>

#######################################################################

4. Enable the virtual host entry for phabricator.

# a2ensite phabricator.conf

# service apache2 reload

5. Configure the MySQL connection for Phabricator

– create database

# /var/www/codereview/phabricator/bin/config set mysql.user mysql_username

# /var/www/codereview/phabricator/bin/config set mysql.pass mysql_password

# /var/www/codereview/phabricator/bin/config set mysql.host mysql_host

# /var/www/codereview/phabricator/bin/storage upgrade

– tweak MySQL

Open /etc/mysql/my.cnf and add the following line under [mysqld] section:

sql-mode = STRICT_ALL_TABLES

#service mysql restart

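Set the base URI of the Phabricator install

# /var/www/codereview/phabricator/bin/config set phabricator.base-uri 'http://phabricator.your-domain.com/'

(replace phabricator.your-domain.com with your own domain; the value must be a full URI including the protocol)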

Configure Outbound Email – External SMTP (Google Apps)

Set the following configuration keys using /var/www/codereview/phabricator/bin/config set <key> <value>:

– metamta.mail-adapter -> PhabricatorMailImplementationPHPMailerAdapter

– phpmailer.mailer -> smtp

– phpmailer.smtp-host -> smtp.gmail.com

– phpmailer.smtp-port -> 465

– phpmailer.smtp-user -> Your Google apps mail id

– phpmailer.smtp-password -> set to your password used for authentication

– phpmailer.smtp-protocol -> ssl
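
The keys listed above can be applied in one pass with a small wrapper around bin/config set. The following is a minimal sketch, not part of Phabricator: the set_key helper and the PHAB_CONFIG, DRY_RUN, SMTP_USER and SMTP_PASSWORD variables are names assumed here for illustration, and the default user and password values are placeholders. By default it only prints the commands it would run; set DRY_RUN=0 to apply them.

```shell
#!/bin/sh
# Sketch: apply the outbound mail settings listed above.
# set_key, PHAB_CONFIG, DRY_RUN, SMTP_USER and SMTP_PASSWORD are
# hypothetical names chosen for this example.

PHAB_CONFIG="${PHAB_CONFIG:-/var/www/codereview/phabricator/bin/config}"
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise run "bin/config set KEY VALUE".
set_key() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$PHAB_CONFIG set $1 $2"
  else
    "$PHAB_CONFIG" set "$1" "$2"
  fi
}

set_key metamta.mail-adapter PhabricatorMailImplementationPHPMailerAdapter
set_key phpmailer.mailer smtp
set_key phpmailer.smtp-host smtp.gmail.com
set_key phpmailer.smtp-port 465
# Placeholder defaults: export SMTP_USER and SMTP_PASSWORD before a real run.
set_key phpmailer.smtp-user "${SMTP_USER:-you@your-domain.com}"
set_key phpmailer.smtp-password "${SMTP_PASSWORD:-changeme}"
set_key phpmailer.smtp-protocol ssl
```

Note that dry-run mode echoes the password value as well, so avoid running it with real credentials in a shared terminal or log.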

Start the phabricator daemons

You can start all the Phabricator daemons using the script

# /var/www/codereview/phabricator/bin/phd start

To start daemons at the boot time, add this entry to the file /etc/rc.local

/var/www/codereview/phabricator/bin/phd start

Diffusion repository hosting with git

1. Install git

#apt-get install git

2. Create a local repository directory:

#mkdir -p /data/repo

3. Set the repository.default-local-path key to the new local repository directory.

Go to Config -> Repositories -> repository.default-local-path

4. Configure System user accounts

Phabricator uses as many as three user accounts. These are system user accounts on the machine Phabricator runs on, not Phabricator user accounts.

* daemon-user – The user the daemons run as

We will configure the root user to run the daemons

* www-user – The user the web server runs as

We will use www-data as the web user

* vcs-user – The user that users will connect over SSH as

We will use the git user as the vcs-user

To enable SSH access to repositories, edit /etc/sudoers file using visudo to contain:

#includedir /etc/sudoers.d

git ALL=(root) SETENV: NOPASSWD: /usr/bin/git-upload-pack, /usr/bin/git-receive-pack, /usr/bin/git

Since we are going to enable SSH access to the repositories, make sure the following conditions hold.

– Open /etc/shadow and find the line for vcs-user, git.

The second field (which is the password field) must not be set to !!. This value will prevent login. If it is set to !!, edit it and set it to NP (“no password”) instead.

– Open /etc/passwd and find the line for the vcs-user, git.

The last field (which is the login shell) must be set to a real shell. If it is set to something like /bin/false, then sshd will not be able to execute commands. Instead, you should set it to a real shell, like /bin/sh.
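
The two checks above can be scripted instead of inspecting the files by hand. The following is a minimal sketch under stated assumptions: field_of and check_vcs_user are hypothetical helper names, and the check only looks for the !! value and the non-shell login entries described above.

```shell
#!/bin/sh
# Sketch: inspect the vcs-user's login shell and password field.
# field_of and check_vcs_user are hypothetical helper names.

# Print colon-separated field number $3 for user $1 from file $2.
field_of() {
  awk -F: -v user="$1" -v n="$3" '$1 == user { print $n }' "$2"
}

# Report problems that would block SSH logins for user $1.
# $2 and $3 default to the real passwd and shadow files.
check_vcs_user() {
  passwd_file="${2:-/etc/passwd}"
  shadow_file="${3:-/etc/shadow}"
  status=0

  shell=$(field_of "$1" "$passwd_file" 7)
  case "$shell" in
    ""|*/false|*/nologin)
      echo "login shell '$shell' is not a real shell"
      status=1 ;;
  esac

  pass=$(field_of "$1" "$shadow_file" 2)
  if [ "$pass" = '!!' ]; then
    echo 'password field is !!, login is blocked'
    status=1
  fi
  return "$status"
}

# Example (reading /etc/shadow needs root):
# check_vcs_user git && echo "git user looks fine"
```

The optional second and third arguments make it possible to try the check against copies of the files before touching the real ones.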

– Set root as the daemon user (phd.user) and git as the SSH user:

# /var/www/codereview/phabricator/bin/config set phd.user root

# /var/www/codereview/phabricator/bin/config set diffusion.ssh-user git

5. Configuring SSH

We will move the normal sshd daemon to another port, say 222, and use that port to get a normal login shell. On port 22 we will run a highly restrictive sshd managed by Phabricator.

Move Normal SSHD

– make a backup of sshd_config before making any changes.

#cp /etc/ssh/sshd_config /etc/ssh/sshd_config.backup

– Update /etc/ssh/sshd_config and change the port to another port, such as 222.

Port 222

– Restart sshd and verify that you are able to connect to the new port

ssh -p 222 user@host

Configure and start Phabricator SSHD

We now configure and start a second SSHD instance, which will run on port 22. This instance uses a special locked-down configuration that lets Phabricator handle authentication and command execution.

– Create a phabricator-ssh-hook.sh file

– Create a sshd_phabricator config file

– Start a copy of sshd using the new configuration

Create phabricator-ssh-hook.sh: Copy the template in phabricator/resources/sshd/phabricator-ssh-hook.sh to somewhere like /usr/lib/phabricator-ssh-hook.sh and edit it to have the correct settings.

##############################################################

#!/bin/sh

# NOTE: Replace this with the username that you expect users to connect with.

VCSUSER="git"

# NOTE: Replace this with the path to your Phabricator directory.

ROOT="/var/www/codereview/phabricator"

if [ "$1" != "$VCSUSER" ];

then

exit 1

fi

exec "$ROOT/bin/ssh-auth" $@

##############################################################

Make it owned by root and restrict editing:

#sudo chown root /usr/lib/phabricator-ssh-hook.sh

#chmod 755 /usr/lib/phabricator-ssh-hook.sh

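Create sshd_config for Phabricator: Copy the template in phabricator/resources/sshd/sshd_config.phabricator.example to somewhere like /etc/ssh/sshd_config.phabricator, then edit AuthorizedKeysCommand, AuthorizedKeysCommandUser and AllowUsers in the copy to match your hook path (/usr/lib/phabricator-ssh-hook.sh) and your vcs-user (git).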

Start Phabricator SSHD

#sudo /usr/sbin/sshd -f /etc/ssh/sshd_config.phabricator

Note: Add this command to /etc/rc.local to start the Phabricator SSHD on startup.

If you did everything correctly, you should be able to run this:

#echo {} | ssh git@phabricator.your-company.com conduit conduit.ping

and get a response like this:

{"result":"phab-server","error_code":null,"error_info":null}

You should now be able to access your instance over ssh on port 222 for normal login and administrative purposes. Phabricator SSHD runs on port 22 to handle authentication and command execution.
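
The conduit.ping check above can be wrapped in a small test for use in a monitoring or post-install script. This is a minimal sketch: ping_ok is a hypothetical helper that does a plain text match for a null error_code in the reply and does not parse the JSON properly.

```shell
#!/bin/sh
# Sketch: decide whether a conduit.ping reply reports success.
# ping_ok is a hypothetical helper; it only does a plain text match on the
# reply and does not parse JSON.

ping_ok() {
  printf '%s' "$1" | grep -q '"error_code":null'
}

# Example:
# reply=$(echo {} | ssh git@phabricator.your-company.com conduit conduit.ping)
# ping_ok "$reply" && echo "Phabricator SSH is working"
```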

6. To create a git repository

Go to Diffusion -> New Repository -> Create a New Hosted Repository

Upgrade Phabricator

Since Phabricator is under active development, you should update frequently. To update Phabricator:

– Stop the web server

– Run git pull in libphutil/, arcanist/ and phabricator/.

– Run phabricator/bin/storage upgrade.

– Restart the web server.

You can also use a script similar to this one to automate the process:

http://www.phabricator.com/rsrc/install/update_phabricator.sh
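
For reference, the manual upgrade steps listed above can be sketched locally as follows. This is an illustration under stated assumptions, not the script linked above: run, upgrade_phabricator, PHAB_ROOT and DRY_RUN are names made up for this example, the web server is assumed to be the apache2 service used earlier in this guide, and git -C needs git 1.8.5 or newer. By default the sketch only prints the commands; set DRY_RUN=0 to execute them.

```shell
#!/bin/sh
# Sketch of the manual upgrade steps, printing commands by default.
# run, upgrade_phabricator, PHAB_ROOT and DRY_RUN are hypothetical names.

PHAB_ROOT="${PHAB_ROOT:-/var/www/codereview}"
DRY_RUN="${DRY_RUN:-1}"

# Echo the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Stop the web server, pull all three checkouts, upgrade storage, restart.
upgrade_phabricator() {
  run service apache2 stop || return 1
  for repo in libphutil arcanist phabricator; do
    run git -C "$PHAB_ROOT/$repo" pull || return 1
  done
  run "$PHAB_ROOT/phabricator/bin/storage" upgrade || return 1
  run service apache2 start || return 1
}

upgrade_phabricator
```

Stopping on the first failed step keeps the web server down if a pull or the storage upgrade fails, which makes the problem visible instead of serving a half-upgraded install.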